Political science is often described as the study of power, institutions, and collective behavior, but beneath those big themes lies a quieter, more technical engine that makes the entire discipline work. That engine is political methodology: the field devoted to understanding how political scientists generate evidence, evaluate claims, and build reliable knowledge about political life.

If political theory asks what justice ought to be, and comparative politics asks how states differ, political methodology asks a different but essential question: How do we know any of this? It is the discipline’s epistemological backbone, the set of tools and logics that allow scholars to move from intuition to inference, from anecdote to analysis, from observation to explanation.

And in an era defined by data abundance, algorithmic governance, and rapid shifts in political behavior, political methodology has become one of the most dynamic and consequential areas of the field.

The Core Purpose of Political Methodology

At its heart, political methodology is about making political science more precise, more transparent, and more trustworthy. It provides the frameworks that help researchers:

- Design studies that can actually answer the questions they care about

- Distinguish correlation from causation

- Evaluate the strength of evidence

- Understand uncertainty rather than hide it

- Build models that illuminate political behavior rather than obscure it

Political methodology is not just about statistics, although statistics are a major component. It is about research design, measurement, causal inference, and the philosophical commitments that underlie scientific inquiry.

It is the discipline’s way of saying: If we are going to make claims about the political world, we must be able to defend how we arrived at them.

From Early Quantification to Modern Causal Inference

The field has evolved dramatically over the past century.

The Behavioral Revolution

In the mid‑20th century, political science shifted from descriptive, historical narratives toward more empirical, data‑driven approaches. Survey research, voting studies, and early statistical models became central. Political methodology took shape as a distinct subfield in the decades that followed, providing the tools needed to analyze large datasets and test hypotheses about political behavior.

The Causal Inference Turn

By the late 20th and early 21st centuries, the field underwent another transformation. Scholars began to focus intensely on causality—not just whether variables were associated, but whether one caused the other. This shift brought new tools:

- Natural experiments

- Instrumental variables

- Regression discontinuity designs

- Difference‑in‑differences

- Field experiments

- Survey experiments

These methods allowed political scientists to make stronger, more credible claims about how political processes actually work.
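To make one of these designs concrete, here is a minimal sketch of the simplest two-group, two-period difference-in-differences calculation. The turnout figures and the function name `diff_in_diff` are hypothetical, invented purely for illustration. The estimate can only be read causally under the parallel-trends assumption: that the treated group would have changed by the same amount as the control group had the reform not happened.

```python
from statistics import mean


def diff_in_diff(treated_pre, treated_post, control_pre, control_post):
    """Return the 2x2 difference-in-differences estimate.

    The change in the control group stands in for what would have
    happened to the treated group without treatment (parallel trends).
    """
    treated_change = mean(treated_post) - mean(treated_pre)
    control_change = mean(control_post) - mean(control_pre)
    return treated_change - control_change


# Hypothetical turnout rates (percent) in districts with and without
# a reform, before and after it took effect. Not real data.
treated_pre = [50.0, 52.0, 54.0]
treated_post = [58.0, 60.0, 62.0]
control_pre = [48.0, 50.0, 52.0]
control_post = [51.0, 53.0, 55.0]

estimate = diff_in_diff(treated_pre, treated_post, control_pre, control_post)
print(f"Difference-in-differences estimate: {estimate:.1f} points")
```

In applied work the same quantity is usually estimated with a regression that includes group and period indicators, which also yields standard errors. The arithmetic above only shows the core logic.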

The Computational Era

Today, political methodology sits at the intersection of political science, statistics, and computer science. Scholars use:

- Machine learning

- Text-as-data approaches

- Network analysis

- Automated content analysis

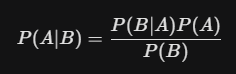

- Bayesian modeling

- Big‑data pipelines

These tools allow researchers to analyze everything from legislative speeches to social media networks to satellite imagery of conflict zones.
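As a rough illustration of the text-as-data idea, the sketch below turns short speeches into word counts, the kind of bag-of-words representation that many text models start from. The speakers, the speech snippets, and the helper `term_counts` are all hypothetical. Real pipelines typically add steps such as stop-word removal and stemming.

```python
import re
from collections import Counter


def term_counts(text):
    """Lowercase the text, split it into word tokens, and count them."""
    tokens = re.findall(r"[a-z']+", text.lower())
    return Counter(tokens)


# Hypothetical speech snippets, invented for illustration.
speeches = {
    "member_a": "We must cut taxes. Lower taxes mean growth.",
    "member_b": "We must fund schools. Schools mean opportunity.",
}

counts = {speaker: term_counts(text) for speaker, text in speeches.items()}

for speaker, counter in counts.items():
    # most_common(3) lists the three most frequent tokens for each speaker
    print(speaker, counter.most_common(3))
```

Comparing such counts across speakers is the starting point for more elaborate approaches, such as scaling legislators by their word use or fitting topic models.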

Political methodology has become not just a set of techniques but a culture of inquiry—one that values transparency, replicability, and methodological innovation.

Why Political Methodology Matters for Understanding Politics

Political methodology is sometimes misunderstood as a technical niche, but its influence is everywhere.

It shapes how we understand elections

From forecasting models to voter‑file analysis to turnout experiments, methodological tools help scholars and journalists interpret electoral dynamics with greater clarity.

It improves public policy evaluation

Causal inference methods allow researchers to assess whether policies actually work—whether a policing reform reduces violence, whether a welfare program increases employment, whether a civic education initiative boosts participation.
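A minimal sketch of what such an evaluation can look like for a randomized experiment: the difference in mean outcomes between treatment and control groups, reported with an approximate 95% confidence interval rather than as a bare point estimate. The outcome data and the function name `difference_in_means` are hypothetical, and the normal approximation used here is crude for samples this small.

```python
from math import sqrt
from statistics import mean, variance


def difference_in_means(treatment, control):
    """Return the estimated effect and an approximate 95% interval.

    Uses the unpooled standard error and a normal approximation
    (1.96 standard errors), which is only a rough guide in small samples.
    """
    estimate = mean(treatment) - mean(control)
    std_error = sqrt(
        variance(treatment) / len(treatment)
        + variance(control) / len(control)
    )
    interval = (estimate - 1.96 * std_error, estimate + 1.96 * std_error)
    return estimate, interval


# Hypothetical outcomes: 1 = participated, 0 = did not. Not real data.
treatment = [1, 1, 0, 1, 1, 0, 1, 1]
control = [0, 1, 0, 0, 1, 0, 1, 0]

estimate, (low, high) = difference_in_means(treatment, control)
print(f"Estimated effect: {estimate:.3f}")
print(f"Approximate 95% interval: ({low:.3f}, {high:.3f})")
```

With only eight units per group the interval is wide and includes zero, which is the point of reporting it: the estimate alone would overstate how much the data can say.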

It strengthens democratic accountability

Methodological rigor helps uncover misinformation, detect gerrymandering, evaluate representation, and expose inequities in political systems.

It helps us interpret the digital public sphere

Computational methods allow scholars to analyze online behavior, algorithmic amplification, and the structure of digital political communities.

It builds trust in political science

In a time when public trust in institutions is fragile, transparent and replicable research practices help ensure that political science remains credible and publicly valuable.

The Ethical Dimensions of Methodological Power

With methodological sophistication comes responsibility. Political methodologists must grapple with:

- The ethics of experimentation in political contexts

- The privacy implications of large‑scale data collection

- The potential for algorithmic bias

- The risk of over‑quantification

- The challenge of communicating uncertainty to the public

Political methodology is not just about technical skill; it is about judgment, interpretation, and ethical stewardship of evidence.

The Future of Political Methodology

The field is moving toward greater openness, interdisciplinarity, and computational depth. Three trends stand out:

1. Transparency and Replication

Open data, open code, and preregistration are becoming standard expectations. The goal is not perfection but honesty—making research processes visible and accountable.

2. Integration with Computational Social Science

As political life becomes more digitized, political methodology increasingly draws from computer science, natural language processing, and network theory.

3. Methodological Pluralism

The future is not purely quantitative. Qualitative and mixed‑methods approaches are gaining renewed respect, especially for understanding meaning, identity, and context. Political methodology is expanding to include interpretive rigor alongside statistical rigor.

Conclusion: The Quiet Power of Methodological Thinking

Political methodology may not always be the most visible part of political science, but it is the foundation that allows the discipline to function. It is the craft of turning political questions into researchable problems, of transforming messy realities into analyzable evidence, and of ensuring that claims about the political world rest on something sturdier than intuition.

In a moment when political life is complex, fast‑moving, and often opaque, methodological clarity is not just an academic virtue—it is a democratic one.

Political methodology teaches us to ask better questions, to evaluate evidence with humility, and to understand the political world with both precision and care. It is, in many ways, the discipline’s most quietly transformative force.